What Went Wrong with Polling in the 2016 Election?

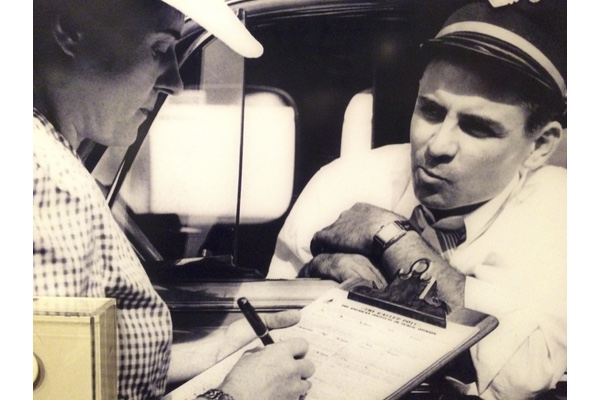

Gallup poll, 1940s (Smithsonian)

Political polling today hardly resembles the scientific method for capturing public opinion that the field’s founding fathers, George Gallup and Elmo Roper, intended. The media and the public routinely use polling results to predict election outcomes. Political candidates use polls to gauge their likeability among voters and to develop strategies for persuading citizens to adopt their policies.

The recent polling disaster in Election 2016 has sparked a reckoning among the field’s practitioners. Courtney Kennedy, director of survey research at the Pew Research Center, told the Associated Press that Donald Trump’s win “was an important polling miss” with “systematic overrepresentation of [Hillary] Clinton’s support and underrepresentation for Trump’s.”

The American Association for Public Opinion Research has now convened a committee to study what went wrong in the election’s polling, but we have been here before. Concerns about the power of polls to influence elections have been voiced since Gallup called polling the “pulse of democracy” in 1940. When Gallup polled a demographically representative sample to correctly predict Franklin Delano Roosevelt’s 1936 reelection win, he considered polls to be an expression of what the “average American” thought, not a prediction of election results. Gallup positioned himself as a scientist, and he regularly proclaimed publicly that he did not vote. “We have not the slightest interest in who wins an election,” he said. “All we want to do is be right.”

Roper agreed. The purpose of polling was to determine the “people’s voice,” not sway it. Polls would be the way the opinions of everyday Americans would be communicated to people in positions of power.

Critics were quick to point out that polling results could influence independent voters, a charge that Gallup dismissed. He argued that while people think “the American people behave like sheep,” there was “not one bit of scientific evidence” to support such a conclusion.

Gallup and Roper failed to consider how polling could be used to shape the public narrative about what presidential campaigns wanted Americans to believe. Author Sarah Igo, in her book The Averaged American, argues that the pollsters ignored the possibility that politicians “would rely on opinion research to fashion targeted messages for public consumption as much as to guide policy making.”

Worse, she writes, pollsters had to make subjective decisions about how to translate the data into the easily understood facts and figures that the public and press wanted. The experts who conducted the polls became the arbiters of what constituted the people’s voice.

When FDR became an early adopter of polling data, he studied the polls not to learn what the public thought or wanted, as Gallup intended, but to learn how to influence and persuade the public to accept his policies. This trend continued over subsequent presidential administrations. A study of polling by the Kennedy, Johnson, Nixon and Reagan administrations shows a shift from a desire to understand the public’s policy preferences to the use of polling to determine the president’s public image and personal appeal.

At times, even the pollsters disagreed on how to interpret the data. A public spat between two Democratic pollsters about what former President Bill Clinton’s 1996 reelection victory meant left the Democratic Party wondering what path it should follow going forward. Stick to centrist positions, as pollster Mark Penn said at the time? Or stay to the left by defending traditional Democratic programs like Medicare, as pollster Stanley B. Greenberg proposed?

The danger to America’s democratic process arises when people draw conclusions from polling data that are beyond the scope of what polls are designed to reveal. Polls capture public opinion among a segment of the population about a particular issue at one moment in time. Even if the polls get the popular vote right, as they did for Hillary Clinton in 2016, they are not designed to reflect Electoral College wins and losses. “National polls don’t usually show Electoral College vote counts, and don’t often maintain the granularity to make the kind of state-by-state predictions to make those projections, so their usefulness even in aggregate to forecast elections is limited,” an article in The Atlantic said.

There are also weaknesses in the polling process itself. Given the prevalence of mobile phones, pollsters now face obstacles trying to reach enough people to gather a representative sample. Accuracy in survey responses is another issue. Voters may respond in ways that make themselves look good in the eyes of others instead of reporting what they will actually do. For example, survey respondents may say they intend to vote because it is socially desirable to do so even if they have no intention of voting.

In the months leading up to the election, the Trump campaign warned that the national polls could be wrong, but it was easy for the media to dismiss that as nothing more than political spin. On August 22, 2016, Trump’s campaign manager, Kellyanne Conway, a pollster by training, said on the U.K.’s Channel 4 News that an “undercover Trump voter” was not being reflected accurately in the polls because of social desirability bias. Conway said that Trump performed “consistently better” in online polls where a pollster is not talking to the voter directly because “it’s become socially desirable, if you’re a college educated person in the United States of America, to say that you’re against Donald Trump.”

On The O’Reilly Factor on August 30, 2016, Conway also questioned how well traditional polling methods can capture an “unconventional candidate” like Trump. “If you are only using a list of actual voters from past elections, you are missing a swathe of electorate particularly in some of these swing states who may be either first-time voters, or had been long-time non-voters who want to come back into the fold.”

It will take the committee of polling experts until May to determine what went wrong with the polling in Election 2016 and to recommend how the practice can be improved. But we don’t need to wait for the committee’s report to conclude that polling does not deserve the high regard it now receives in our elections. At the very least, more people, including the news media, need to become polling skeptics.

History has already taught us how America’s democratic process is hurt when voters make their election decisions using information from polls that are based on half-baked data. Perhaps CBS radio commentator Edward R. Murrow said it best when reporting on how the polls underestimated Dwight D. Eisenhower’s 1952 win. “Yesterday the people surprised the pollsters, the prophets, and many politicians. They demonstrated, as they did in 1948, that they are mysterious and their motives are not to be measured by mechanical means . . . . Those who believe that we are predictable . . . who believe that sampling depth, interviewing, allocating the undecided vote, and then reducing the whole thing to a simple graph or chart, have been undone again. And we are in a measure released from the petty tyranny of those who assert that they can tell us what we think, what we believe, what we will do, what we hope and what we fear, without consulting us – all of us.”

Murrow’s words about polling still ring true today.